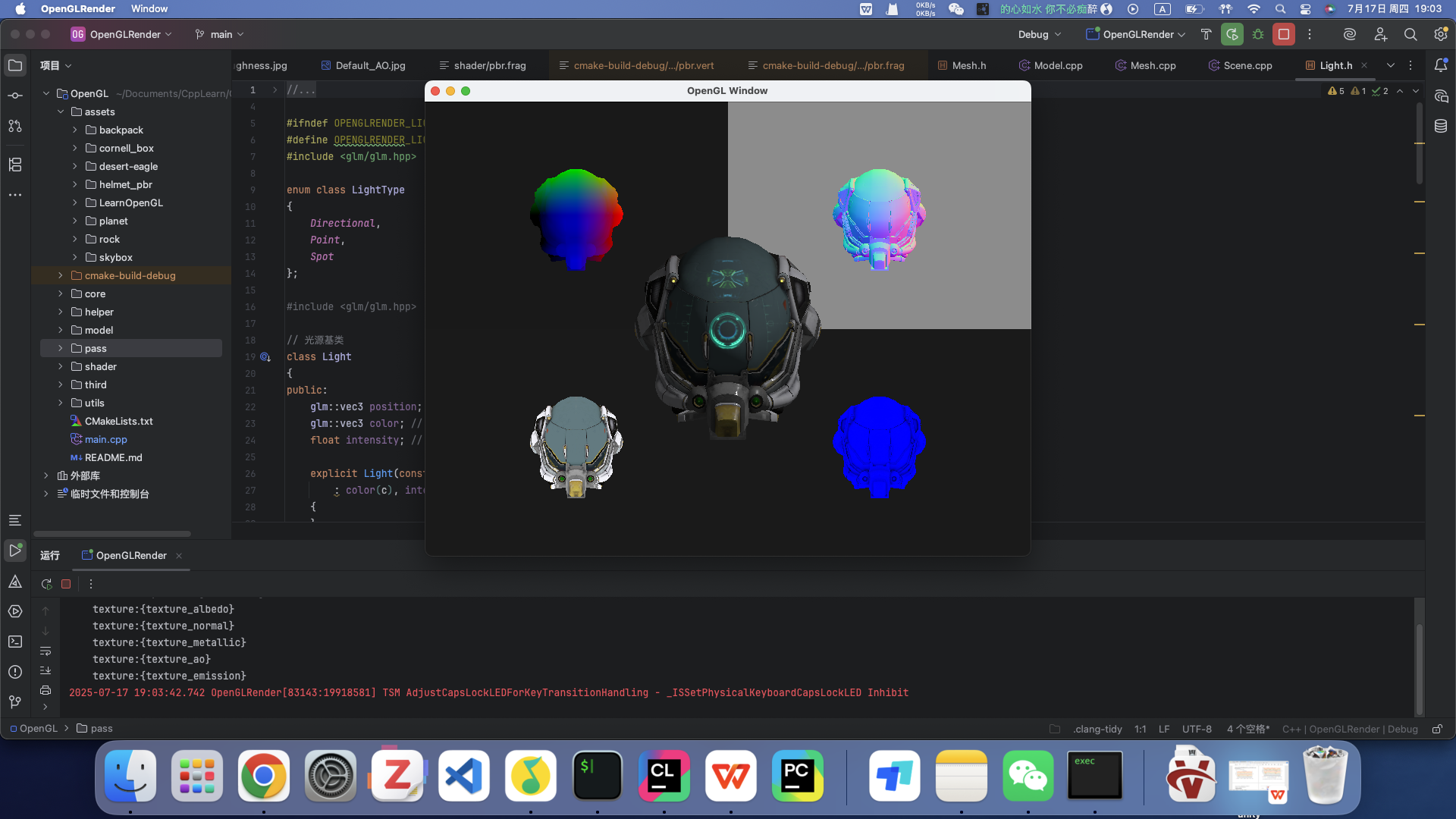

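Implemented features

I won't write up the underlying architecture separately. Here is what already works:

- Deferred rendering pipeline

- G-Buffer visualization for debugging

- Direct lighting for PBR materials

Next, let's look at how to implement IBL in OpenGL.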

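Theory

Image based lighting (IBL) treats the whole surrounding environment as one big light source. IBL usually stores the environment in a cubemap and treats every texel of it as a light source.

With IBL, direct light is not the only thing that contributes to a shading point; ambient light arriving from every direction contributes too. I used to wonder why the rendering equation contains an integral while shaders never seem to evaluate one. It turns out the equation describes the ideal case, and computing the light from all directions is hard: given any direction vector wi, we need some way to fetch the scene radiance in that direction, and the integral has to be evaluated in real time. Monte Carlo sampling can approximate the integral, but that is not how IBL works at run time. To be honest, the more I listened to YLQ explain this, the more confused I got.

Let's see how IBL avoids evaluating the integral.

Avoiding the integral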

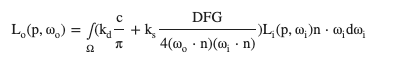

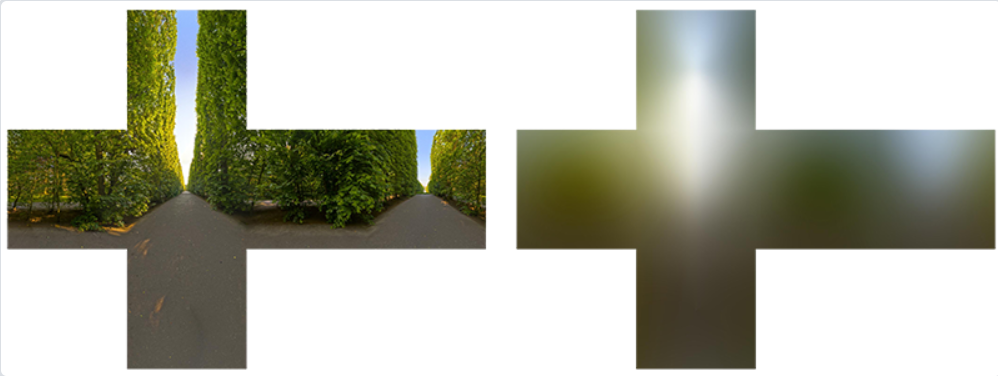

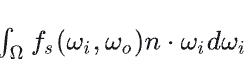

First, the rendering equation has the following form, already split into a diffuse part and a specular part.

Splitting at the + sign gives two separate integrals.
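Since the formulas in this post live in images, here is the split written out as a sketch. The notation follows the LearnOpenGL formulation of the Cook-Torrance BRDF, which I am assuming matches the images: c is the albedo, and D, F, G are the usual normal distribution, Fresnel and geometry terms.

```latex
\begin{aligned}
L_o(p,\omega_o)
  &= \int_\Omega \left( k_d \frac{c}{\pi}
     + \frac{D F G}{4 (\omega_o \cdot n)(\omega_i \cdot n)} \right)
     L_i(p,\omega_i) \, (n \cdot \omega_i) \, d\omega_i \\
  &= \int_\Omega k_d \frac{c}{\pi} \, L_i(p,\omega_i) \, (n \cdot \omega_i) \, d\omega_i
   + \int_\Omega \frac{D F G}{4 (\omega_o \cdot n)(\omega_i \cdot n)} \,
     L_i(p,\omega_i) \, (n \cdot \omega_i) \, d\omega_i
\end{aligned}
```

The first integral is the diffuse part and the second is the specular part; the two are handled separately from here on.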

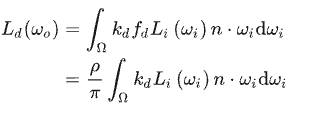

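Diffuse part

Let's look at the diffuse part first. The BRDF used here is the Lambertian model, which is a constant, so it can be pulled straight out of the integral. The numerator is the surface color (albedo).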

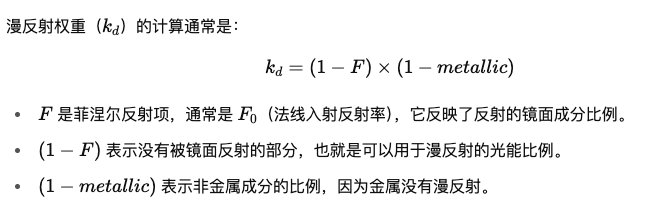

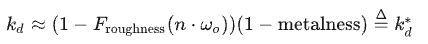

As for kd, the idea is to first compute the diffuse fraction and then remove the influence of metalness (metals have no diffuse reflection). As for why it is designed this way, I asked an AI and it seems to come from Disney's paper.

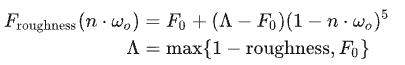

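kd depends on the light direction (because computing F needs wi), so as it stands it cannot be moved out of the integral. We make an approximation: F is normally computed from wi and the half vector, but here we compute it from the camera view direction wo and the normal instead, and then kd can be moved out.

Here F0 denotes the reflectance at normal incidence.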
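The two steps above (approximate F from n and wo, then derive kd from it) can be sketched on the CPU as follows. This is only an illustration under my own assumptions: `Vec3`, `fresnelSchlick` and `diffuseWeight` are hypothetical names, and F uses the plain Schlick approximation.

```cpp
#include <algorithm>
#include <cmath>

struct Vec3 { float x, y, z; };

// Schlick's Fresnel approximation. For IBL the cosine passed in is
// max(dot(n, wo), 0) rather than dot(h, wi), so F no longer depends on wi.
Vec3 fresnelSchlick(float cosTheta, Vec3 F0) {
    float c = std::min(std::max(1.0f - cosTheta, 0.0f), 1.0f);
    float w = c * c * c * c * c;  // (1 - cosTheta)^5
    return { F0.x + (1.0f - F0.x) * w,
             F0.y + (1.0f - F0.y) * w,
             F0.z + (1.0f - F0.z) * w };
}

// Diffuse weight kd: the part that is not reflected specularly (1 - F),
// scaled by (1 - metallic) because metals have no diffuse reflection.
Vec3 diffuseWeight(Vec3 F, float metallic) {
    float m = 1.0f - metallic;
    return { (1.0f - F.x) * m, (1.0f - F.y) * m, (1.0f - F.z) * m };
}
```

Looking straight down the normal (cosTheta = 1) gives F = F0, and a fully metallic surface gets kd = 0, which matches the description above.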

Finally:

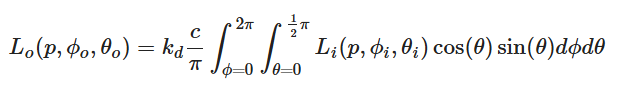

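The only things left inside the integral are the incoming light and the cosine. So for each normal direction n, we can now precompute the value of the integral.

In other words, this texture stores the integral value for each normal direction.

After all this analysis, it also makes sense intuitively. No matter which direction I look from (that is, for any wo, since irradiance is view independent), the environment map's contribution to a point is the sum of the light over the hemisphere centered on that point's normal (because the reflection is diffuse).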

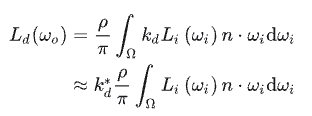

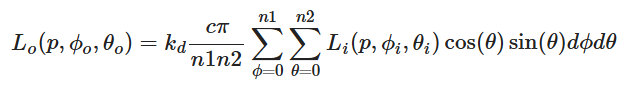

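So we can precompute this integral and store it in a cubemap, called the irradiance map. The integral is evaluated numerically; we can simply take uniformly spaced samples over the hemisphere. Here is the sampling scheme from LearnOpenGL.

First, switch to spherical coordinates.

Then approximate the integral with a Riemann sum.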
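The two formulas did not survive in the text, so here is my reconstruction of them as I understand the LearnOpenGL scheme. The sample counts n1 (over phi) and n2 (over theta) are symbols I am introducing for the sketch.

```latex
\begin{aligned}
L_o^{\text{diffuse}}(p)
  &= k_d \frac{c}{\pi} \int_{\phi=0}^{2\pi} \int_{\theta=0}^{\pi/2}
     L_i(p,\phi,\theta) \, \cos\theta \, \sin\theta \; d\theta \, d\phi \\
  &\approx k_d \frac{c \, \pi}{n_1 n_2}
     \sum_{j=1}^{n_1} \sum_{k=1}^{n_2}
     L_i(p,\phi_j,\theta_k) \, \cos\theta_k \, \sin\theta_k
\end{aligned}
```

The extra sin(theta) comes from the solid angle element in spherical coordinates, and the factor c * pi / (n1 * n2) is c / pi multiplied by the step sizes 2 * pi / n1 and (pi / 2) / n2.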

Note: a real scene is made of multiple local environments (multiple "local scenes").

Across the whole scene:

Each region (probe volume) has its own local environment map;

Probe interpolation (or voxel GI) then produces a spatially continuous lighting transition.

For example, indoor and outdoor areas must use different maps even where the normal direction is the same. That is why probes are needed.

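Specular part

This part is much worse. It depends on wo, the normal direction, and the various BRDF parameters. Even if each direction vector is expressed in spherical coordinates, there are still 9 variables, so it cannot be precomputed directly.

The basic idea is Monte Carlo integration, but sampling this way is too slow for real time.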

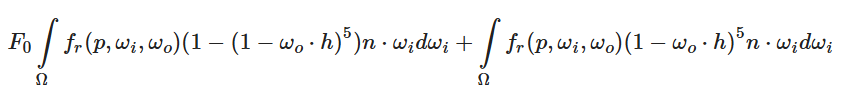

Epic proposed a good solution: the split sum approximation.

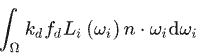

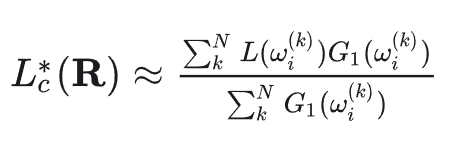

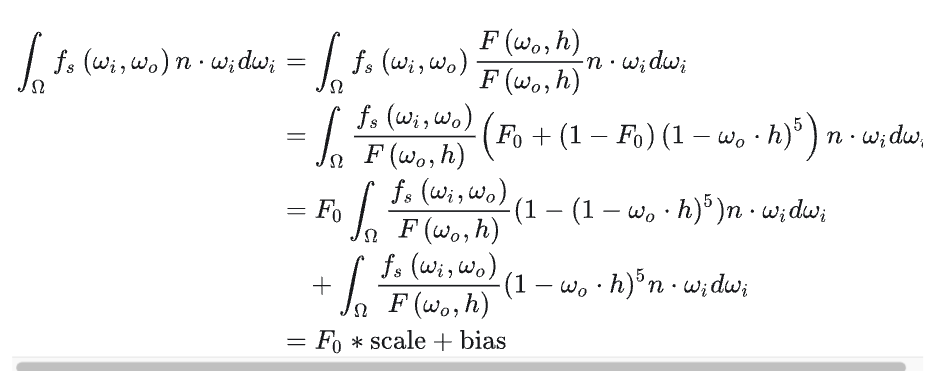

The first idea is to pull the lighting term out of the integral.
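As a sketch of what "pulling the lighting term out" means, this is the split sum as I remember it from Karis's Unreal Engine 4 course notes, written with this post's symbols. Here the omega_k are the N sample directions and p is the PDF they are drawn from; treat the exact notation as my assumption, since the derivation in this post is in the images.

```latex
\frac{1}{N} \sum_{k=1}^{N}
  \frac{L_i(\omega_k) \, f_r(\omega_k, \omega_o) \, \cos\theta_k}{p(\omega_k, \omega_o)}
\;\approx\;
\left( \frac{1}{N} \sum_{k=1}^{N} L_i(\omega_k) \right)
\left( \frac{1}{N} \sum_{k=1}^{N}
  \frac{f_r(\omega_k, \omega_o) \, \cos\theta_k}{p(\omega_k, \omega_o)} \right)
```

The first factor only involves the environment lighting, and the second only involves the BRDF, so each can be precomputed on its own.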

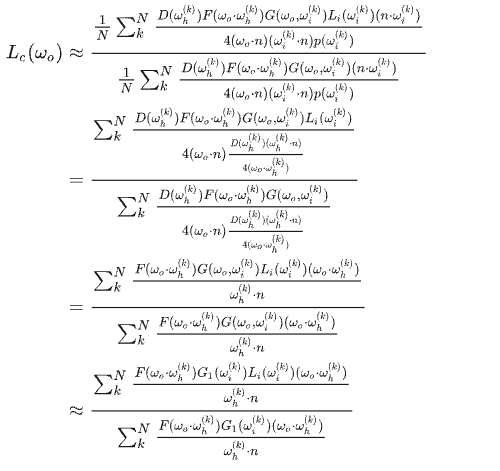

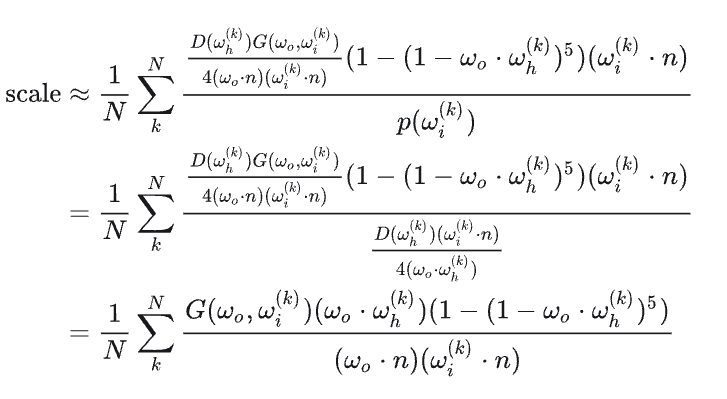

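To work out what the extracted term Lc(wo) is, we evaluate it with Monte Carlo integration, importance sampling according to the normal distribution function. The simplification goes as follows (in essence: derive the PDF from the normal distribution function, substitute it in, and cancel terms between numerator and denominator to get the final result).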

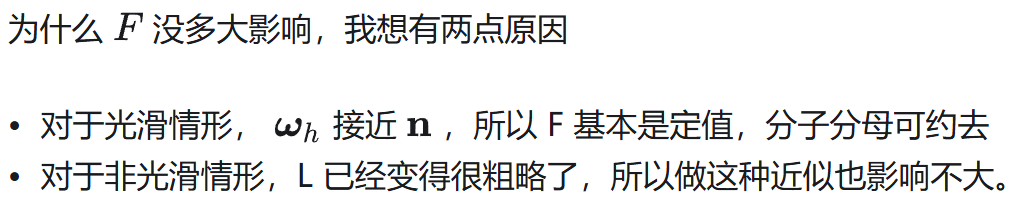

As a further approximation, F has little effect on the result, so it is simply dropped.

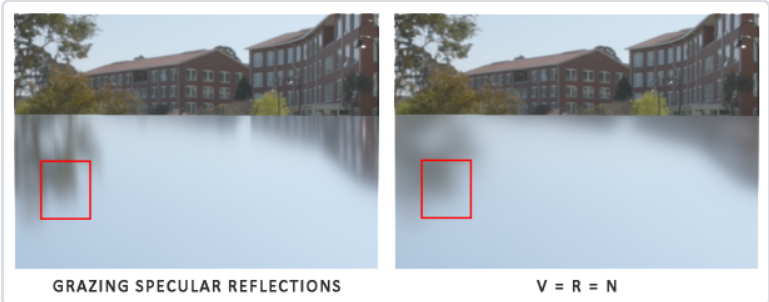

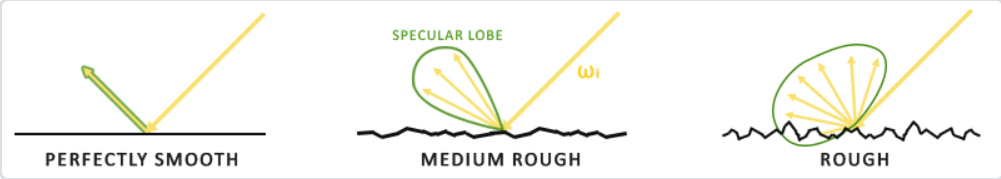

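One more approximation: both wo and the normal direction are approximated by R (the reflection direction), which makes the precomputation independent of the view (the camera direction and the normal direction are both replaced by R). The cost is that the result is no longer correct at grazing angles.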

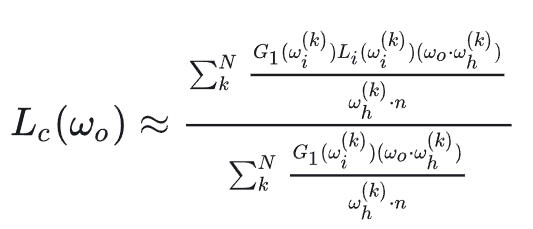

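In summary, a series of approximations splits the integral into two parts, with the first part on the left and the rest on the right. Simplifying and approximating tells us what the first part is, and it is then put back into the original expression.

Precomputing the first part amounts to integrating the lighting over the whole hemisphere centered on the reflection direction, but the result has to be stored in mipmap levels selected by roughness. The approximations all rest on the normal distribution function, which is controlled by roughness: the rougher the surface, the higher the mip level to use.

The first part is precomputed by Monte Carlo integration, with samples drawn from the normal distribution function.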

Now for the second part.

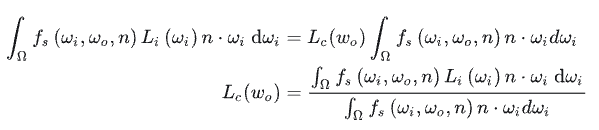

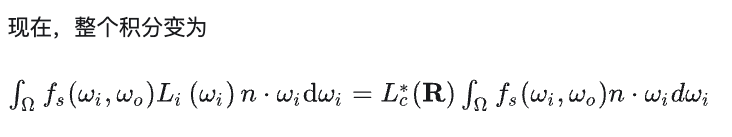

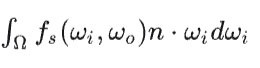

The parameters of this integral are wo, n, F0 (determined by metalness and albedo), and roughness. F0 is a constant, so let's see how to move it out.

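This is another split: the integral is broken into two terms (this time it is just splitting a sum, with no approximation), and F0 is moved out, which leaves a scale term and a bias term to compute.

Both terms are evaluated by Monte Carlo integration using the normal distribution function, which cancels many factors. With F0 removed, the result depends only on the cosine and the roughness, so the two channels of a 2D texture are enough to store it.

This precomputed part is called the LUT. The LUT is determined by the BRDF, so a fixed BRDF has a fixed LUT.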
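Written out, the split into scale and bias looks like this. This is my reconstruction following LearnOpenGL, assuming the Schlick form F = F0 + (1 - F0) * alpha with alpha = (1 - wo . h)^5, where h is the half vector.

```latex
\begin{aligned}
\int_\Omega f_r(p,\omega_i,\omega_o) \, (n \cdot \omega_i) \, d\omega_i
  &= \int_\Omega \frac{f_r}{F} \bigl( F_0 (1 - \alpha) + \alpha \bigr)
     (n \cdot \omega_i) \, d\omega_i \\
  &= F_0 \underbrace{\int_\Omega \frac{f_r}{F} (1 - \alpha) \, (n \cdot \omega_i) \, d\omega_i}_{\text{scale}}
   + \underbrace{\int_\Omega \frac{f_r}{F} \, \alpha \, (n \cdot \omega_i) \, d\omega_i}_{\text{bias}}
\end{aligned}
```

Dividing f_r by F removes the Fresnel term from the BRDF, which is why neither integral depends on F0 any more.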

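OpenGL implementation

HDR and cubemap

Taking high dynamic range (HDR) scene lighting into account is very important in a PBR pipeline. Most PBR inputs are based on real physical properties and measurements, so it is important that the incoming light values match their physical equivalents.

Step 1: load the HDR image and store it as a texture.

Step 2: go from the texture to a cubemap. The vertex shader passes the world position on to the fragment shader.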

The fragment shader samples the HDR image.
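The shader listing itself is not shown here, so as a stand-in, this is a CPU-side sketch of the direction to UV mapping that such a fragment shader typically performs (the atan/asin mapping used by LearnOpenGL). `Vec2`, `Vec3` and `sampleSphericalMap` are names I picked for the illustration.

```cpp
#include <algorithm>
#include <cmath>

struct Vec2 { float u, v; };
struct Vec3 { float x, y, z; };

// Map a unit direction to equirectangular texture coordinates in [0, 1].
// u comes from the azimuth around the Y axis, v from the elevation.
Vec2 sampleSphericalMap(Vec3 d) {
    const float invTwoPi = 0.15915494f;  // 1 / (2 * pi)
    const float invPi = 0.31830989f;     // 1 / pi
    // Clamp y so rounding error cannot push asin outside its domain.
    float y = std::min(std::max(d.y, -1.0f), 1.0f);
    float u = std::atan2(d.z, d.x) * invTwoPi + 0.5f;
    float v = std::asin(y) * invPi + 0.5f;
    return { u, v };
}
```

A direction along +X lands in the middle of the image, and straight up (+Y) lands on the top edge.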

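To convert the equirectangular map into a cubemap, we render a (unit) cube and project the equirectangular map onto each face of the cube from the inside. First, create a cubemap.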

Render the same cube six times, each time facing one face of the cube, and record the result with a framebuffer object.
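For reference, here are the six view directions and up vectors commonly used for this kind of capture, following the LearnOpenGL convention. This is a sketch rather than my actual setup code: `CaptureView` and `kCaptureViews` are illustrative names, and each entry would be fed to a look-at matrix with the eye at the origin, paired with a 90 degree perspective projection at aspect ratio 1.

```cpp
struct Vec3 { float x, y, z; };

// Look-at target and up vector for one cubemap face, eye at the origin.
struct CaptureView { Vec3 target; Vec3 up; };

// Order matches GL_TEXTURE_CUBE_MAP_POSITIVE_X + i for i = 0..5:
// +X, -X, +Y, -Y, +Z, -Z.
const CaptureView kCaptureViews[6] = {
    { {  1.0f,  0.0f,  0.0f }, { 0.0f, -1.0f,  0.0f } },
    { { -1.0f,  0.0f,  0.0f }, { 0.0f, -1.0f,  0.0f } },
    { {  0.0f,  1.0f,  0.0f }, { 0.0f,  0.0f,  1.0f } },
    { {  0.0f, -1.0f,  0.0f }, { 0.0f,  0.0f, -1.0f } },
    { {  0.0f,  0.0f,  1.0f }, { 0.0f, -1.0f,  0.0f } },
    { {  0.0f,  0.0f, -1.0f }, { 0.0f, -1.0f,  0.0f } },
};

inline float dot(Vec3 a, Vec3 b) {
    return a.x * b.x + a.y * b.y + a.z * b.z;
}
```

The up vectors look flipped because cubemap faces follow a top-left image origin convention.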

Now for the actual conversion.

This cubemap can also be used to render the skybox.

Shader part

Code part

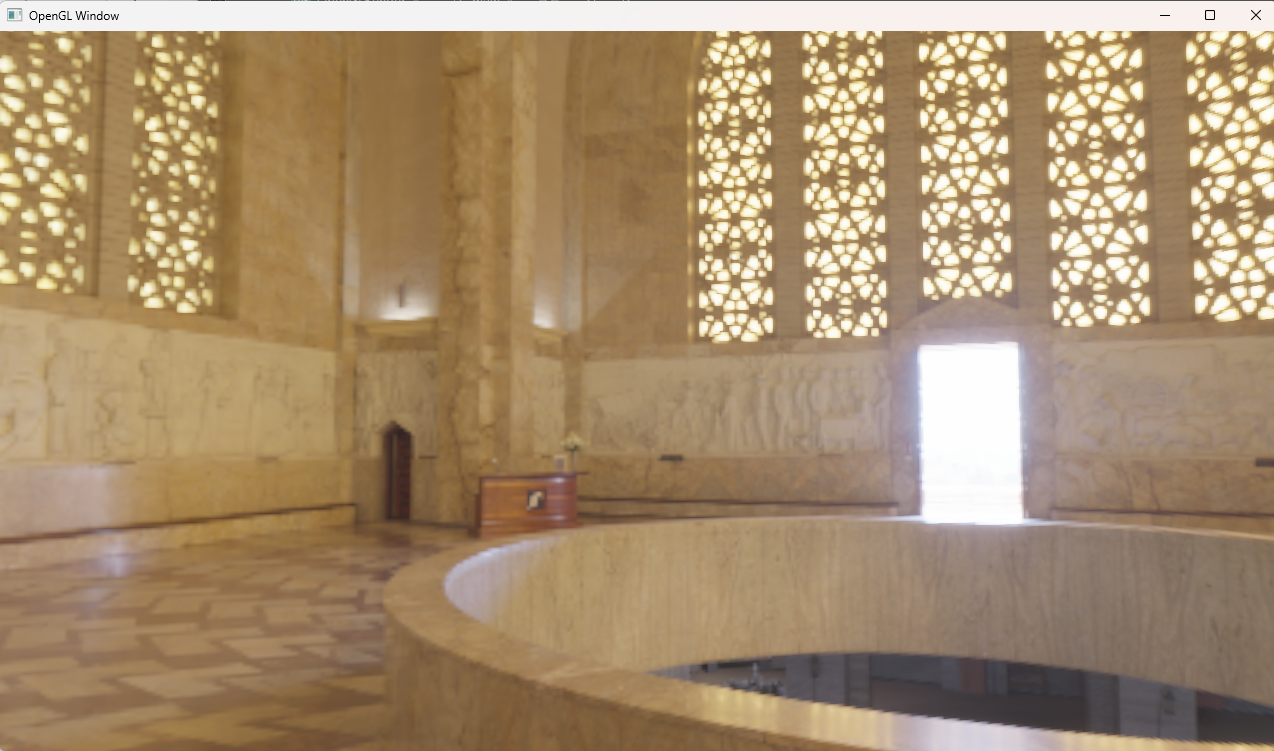

At this point we have the cubemap and the skybox rendering.

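Irradiance map

Precompute, for every direction, the result of integrating over the hemisphere around it. Once that is done, whenever the diffuse term is needed it can be looked up by normal direction.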

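This step works the same way as generating the cubemap; only the fragment shader differs. Because the irradiance map averages all the surrounding radiance, it loses most high-frequency detail, so it can be stored at a low resolution (32x32). I rendered it as a skybox to take a look, and almost no scene detail is left, so high resolution is not needed.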

The shader:
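To make the convolution concrete, here is a CPU-side sketch of the hemisphere Riemann sum for a single normal. It is not the shader itself: it handles one scalar channel, the environment is passed in as a callback, it samples at cell midpoints, and the names (`convolveIrradiance`, `nPhi`, `nTheta`) are my own. Like the LearnOpenGL shader, it returns the integral divided by pi.

```cpp
#include <cmath>
#include <functional>

struct Vec3 { double x, y, z; };

static Vec3 add(Vec3 a, Vec3 b) { return { a.x + b.x, a.y + b.y, a.z + b.z }; }
static Vec3 scale(Vec3 a, double s) { return { a.x * s, a.y * s, a.z * s }; }
static Vec3 cross(Vec3 a, Vec3 b) {
    return { a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x };
}
static Vec3 normalize(Vec3 a) {
    double len = std::sqrt(a.x * a.x + a.y * a.y + a.z * a.z);
    return scale(a, 1.0 / len);
}

// Returns (1 / pi) * integral over the hemisphere around n of
// radiance(w) * cos(theta) dw, using a midpoint Riemann sum.
double convolveIrradiance(Vec3 n, const std::function<double(Vec3)>& radiance,
                          int nPhi = 128, int nTheta = 64) {
    const double pi = 3.14159265358979323846;
    // Build a tangent frame around n; switch helper axis near the pole.
    Vec3 helper = std::fabs(n.z) < 0.999 ? Vec3{ 0.0, 0.0, 1.0 } : Vec3{ 1.0, 0.0, 0.0 };
    Vec3 right = normalize(cross(helper, n));
    Vec3 up = cross(n, right);
    double sum = 0.0;
    for (int i = 0; i < nPhi; ++i) {
        double phi = (i + 0.5) * (2.0 * pi / nPhi);
        for (int j = 0; j < nTheta; ++j) {
            double theta = (j + 0.5) * (0.5 * pi / nTheta);
            // Spherical to Cartesian in tangent space, then to world space.
            double tx = std::sin(theta) * std::cos(phi);
            double ty = std::sin(theta) * std::sin(phi);
            double tz = std::cos(theta);
            Vec3 dir = add(add(scale(right, tx), scale(up, ty)), scale(n, tz));
            sum += radiance(dir) * std::cos(theta) * std::sin(theta);
        }
    }
    return pi * sum / (double(nPhi) * nTheta);
}
```

A constant environment of radiance 1 should come back as roughly 1, which is a handy sanity check for the normalization.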

prefilterMap

First create a cubemap.

The shader renders 5 mip levels and uses importance sampling based on the normal distribution function to precompute the integral for each direction.
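The sampling pieces of such a prefilter shader can be sketched on the CPU like this. I am assuming the usual LearnOpenGL/Epic building blocks: a Hammersley sequence for the random numbers, GGX importance sampling of the half vector (shown in tangent space with the normal along +Z), and a linear mapping from mip level to roughness. All function names here are illustrative.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

struct Vec2 { float x, y; };
struct Vec3 { float x, y, z; };

// Van der Corput radical inverse in base 2 (reverses the bits of the index).
float radicalInverseVdC(std::uint32_t bits) {
    bits = (bits << 16u) | (bits >> 16u);
    bits = ((bits & 0x55555555u) << 1u) | ((bits & 0xAAAAAAAAu) >> 1u);
    bits = ((bits & 0x33333333u) << 2u) | ((bits & 0xCCCCCCCCu) >> 2u);
    bits = ((bits & 0x0F0F0F0Fu) << 4u) | ((bits & 0xF0F0F0F0u) >> 4u);
    bits = ((bits & 0x00FF00FFu) << 8u) | ((bits & 0xFF00FF00u) >> 8u);
    return float(bits) * 2.3283064365386963e-10f;  // divide by 2^32
}

// i-th point of an n-point Hammersley set in [0, 1)^2.
Vec2 hammersley(std::uint32_t i, std::uint32_t n) {
    return { float(i) / float(n), radicalInverseVdC(i) };
}

// Half vector distributed according to the GGX normal distribution,
// in tangent space where the surface normal is (0, 0, 1).
Vec3 importanceSampleGGX(Vec2 xi, float roughness) {
    const float pi = 3.14159265f;
    float a = roughness * roughness;
    float phi = 2.0f * pi * xi.x;
    float cosTheta = std::sqrt((1.0f - xi.y) / (1.0f + (a * a - 1.0f) * xi.y));
    float sinTheta = std::sqrt(std::max(0.0f, 1.0f - cosTheta * cosTheta));
    return { std::cos(phi) * sinTheta, std::sin(phi) * sinTheta, cosTheta };
}

// Roughness assigned to a mip level: level 0 is a mirror, the last is fully rough.
float roughnessForMip(int mip, int mipLevels) {
    return float(mip) / float(mipLevels - 1);
}
```

With 5 mip levels this gives roughness 0, 0.25, 0.5, 0.75 and 1 for levels 0 through 4. Low roughness keeps the sampled half vectors close to the normal, so the lower mip levels stay sharp.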

Rendering code

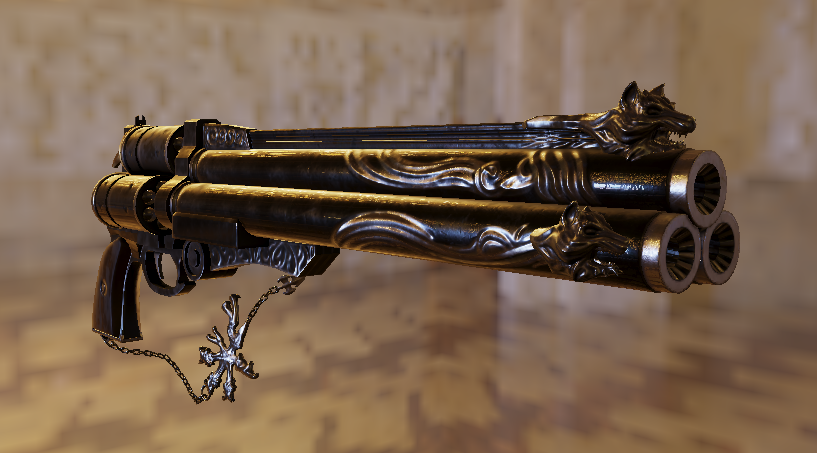

Result of rendering the skybox with the prefilterMap

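LUT

The only thing left to precompute in the rendering equation is the last integral.

After a series of transformations, the resulting expression depends only on the angle between the view direction and the normal and on the roughness, so it is stored in a 2D texture. The integral is again evaluated with GGX importance sampling.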
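As a CPU-side sketch of what one texel of this LUT holds, the function below follows the structure of LearnOpenGL's IntegrateBRDF as I understand it: GGX importance sampling, a Smith geometry term built from Schlick-GGX with k = roughness^2 / 2, and accumulation of the scale and bias terms. The names and the default sample count are my own choices.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

struct ScaleBias { double scale, bias; };

// Van der Corput radical inverse in base 2.
static double radicalInverse(std::uint32_t bits) {
    bits = (bits << 16u) | (bits >> 16u);
    bits = ((bits & 0x55555555u) << 1u) | ((bits & 0xAAAAAAAAu) >> 1u);
    bits = ((bits & 0x33333333u) << 2u) | ((bits & 0xCCCCCCCCu) >> 2u);
    bits = ((bits & 0x0F0F0F0Fu) << 4u) | ((bits & 0xF0F0F0F0u) >> 4u);
    bits = ((bits & 0x00FF00FFu) << 8u) | ((bits & 0xFF00FF00u) >> 8u);
    return double(bits) * 2.3283064365386963e-10;
}

static double geometrySchlickGGX(double nDotX, double roughness) {
    double k = roughness * roughness / 2.0;  // k remapping used for IBL
    return nDotX / (nDotX * (1.0 - k) + k);
}

// Scale and bias for one (nDotV, roughness) pair. Requires nDotV > 0.
ScaleBias integrateBRDF(double nDotV, double roughness,
                        std::uint32_t sampleCount = 1024) {
    const double pi = 3.14159265358979323846;
    // View vector in tangent space; the normal is (0, 0, 1).
    double vx = std::sqrt(std::max(0.0, 1.0 - nDotV * nDotV));
    double vz = nDotV;
    double a = roughness * roughness;
    double scale = 0.0, bias = 0.0;
    for (std::uint32_t i = 0; i < sampleCount; ++i) {
        double u = double(i) / double(sampleCount);
        double v = radicalInverse(i);
        // GGX importance sampled half vector h.
        double phi = 2.0 * pi * u;
        double cosT = std::sqrt((1.0 - v) / (1.0 + (a * a - 1.0) * v));
        double sinT = std::sqrt(std::max(0.0, 1.0 - cosT * cosT));
        double hx = std::cos(phi) * sinT, hz = cosT;
        double vDotH = vx * hx + vz * hz;
        // Light direction is v reflected about h; only its z is needed.
        double nDotL = std::max(2.0 * vDotH * hz - vz, 0.0);
        vDotH = std::max(vDotH, 0.0);
        if (nDotL > 0.0) {
            double g = geometrySchlickGGX(nDotV, roughness) *
                       geometrySchlickGGX(nDotL, roughness);
            double gVis = g * vDotH / (hz * nDotV);
            double fc = std::pow(1.0 - vDotH, 5.0);
            scale += (1.0 - fc) * gVis;
            bias += fc * gVis;
        }
    }
    return { scale / sampleCount, bias / sampleCount };
}
```

Filling the LUT then means calling this once per texel, with nDotV taken from the texel's u coordinate and roughness from its v coordinate, and writing scale and bias to the two channels.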

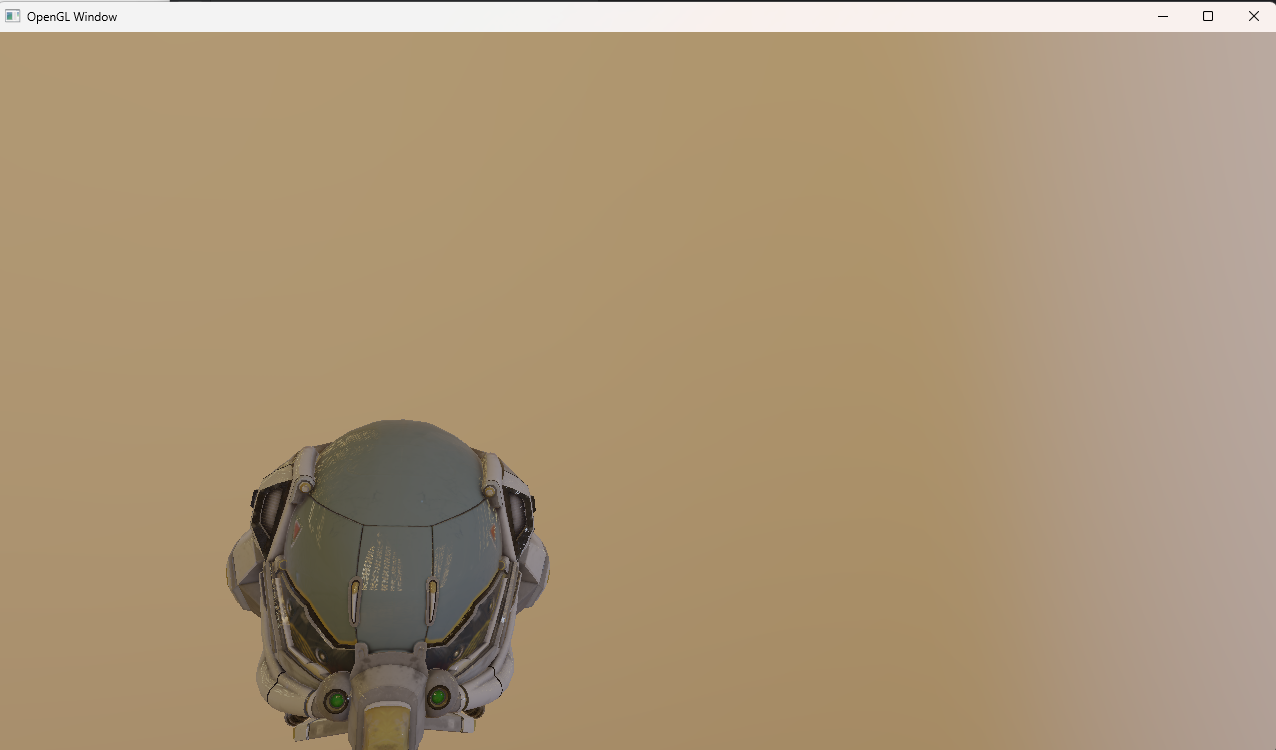

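Putting it together

We now have all the precomputed textures. The next step is to integrate them into the PBR pipeline. The result is an IBL + PBR shader that supports a single light source.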

Results

Switched to ACES Filmic Tone Mapping