Overall pipeline

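- Probes gather lighting: each probe casts 256 rays in all directions, computes the lighting at each hit point, and stores it (the indirect lighting at the hit point can also be added here to simulate infinite-bounce global illumination). hitT is stored as well, so that visibility can be estimated later.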

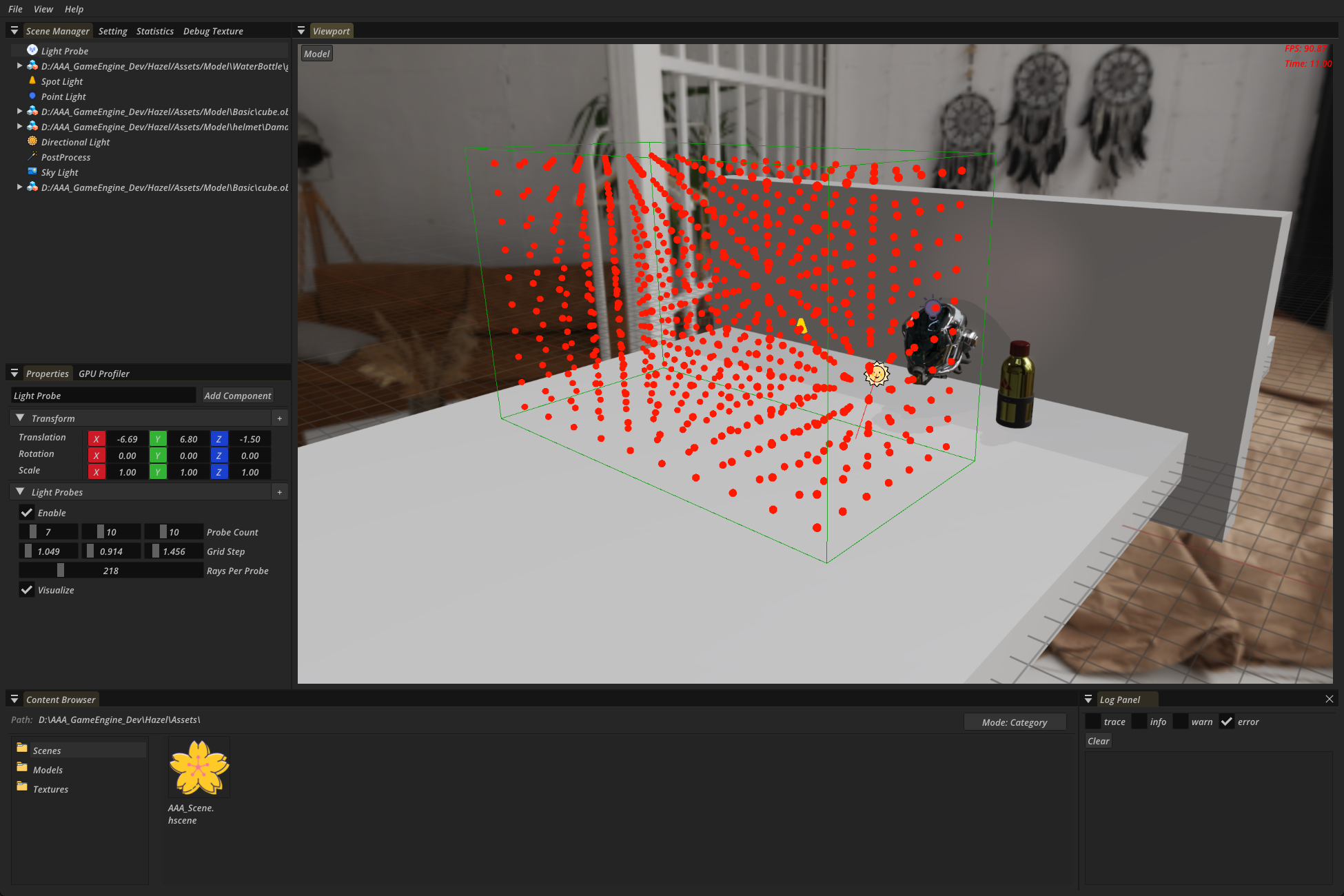

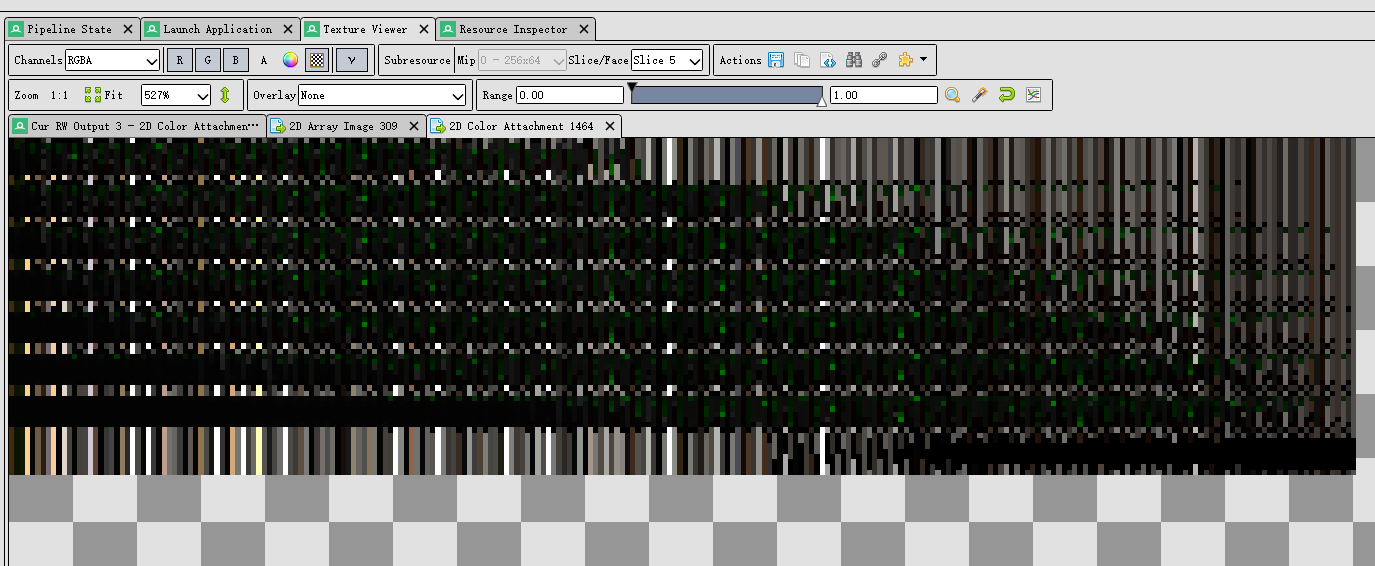

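- Blend irradiance: each probe is allocated 6*6 texels, and each texel represents one normal direction. The irradiance in that direction is estimated with Monte Carlo integration (the same idea as IBL) and then blended over time with a weight, presumably to accumulate more samples.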

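- Blend distance: each probe is allocated 14*14 texels; for each direction the mean of hitT and the mean of hitT squared are computed and blended over time. (Both blend steps use octahedral mapping, and both run into the problem of sampling across texel borders: each probe's block is padded with a one-texel border that is filled by a specific rule before storing, which guarantees that sampling inside one probe never reads another probe's data.)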

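- Lighting pass uses the probes: find the 8 probes surrounding the shading point, fetch from each one the data for the surface normal direction, and take a weighted average. Three weights are used:

  - If the probe is far from the shading point, lower its weight (trilinear interpolation coefficient).

  - If the angle between the direction from the shading point to the probe and the surface normal is too large, lower its weight (direction coefficient).

  - If there is a high probability of an occluder between the shading point and the probe, lower its weight (Chebyshev coefficient).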
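The three weights above can be sketched as a single function that returns the weight of one of the 8 probes. This is a minimal sketch, not the code used in this project: the function name and parameters are hypothetical, and the constants (the `0.2` wrap bias, the cubed Chebyshev term, the `0.05` floor) are values I recall from the public DDGI reference code, so treat them as tunable assumptions.

```glsl
// Hypothetical helper: weight of ONE of the 8 surrounding probes.
// All names and constants here are assumptions made for this sketch.
float ProbeWeightSketch(
    vec3  trilinear,      // per-axis interpolation factor toward this probe, each in [0,1]
    vec3  dirToProbe,     // normalized direction from the shading point to the probe
    vec3  normal,         // normalized surface normal
    float distToProbe,    // distance from the shading point to the probe
    vec2  meanDistances)  // x = mean of hitT, y = mean of hitT squared (distance texture)
{
    // 1. Trilinear coefficient: probes farther away in the grid cell contribute less.
    float w = trilinear.x * trilinear.y * trilinear.z;

    // 2. Direction coefficient: probes behind the surface contribute less.
    float wrap = (dot(dirToProbe, normal) + 1.0) * 0.5;
    w *= wrap * wrap + 0.2;

    // 3. Chebyshev coefficient: upper bound on the probability that nothing
    //    blocks the segment between the shading point and the probe.
    if (distToProbe > meanDistances.x) {
        float variance = abs(meanDistances.x * meanDistances.x - meanDistances.y);
        float d = distToProbe - meanDistances.x;
        float cheb = variance / (variance + d * d);
        w *= max(cheb * cheb * cheb, 0.05);
    }

    // Keep the weight strictly positive so the final normalization never divides by zero.
    return max(w, 1e-6);
}
```

The caller would sum `weight * irradiance` and `weight` over the 8 probes and divide the first by the second.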

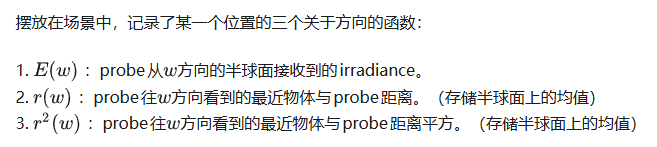

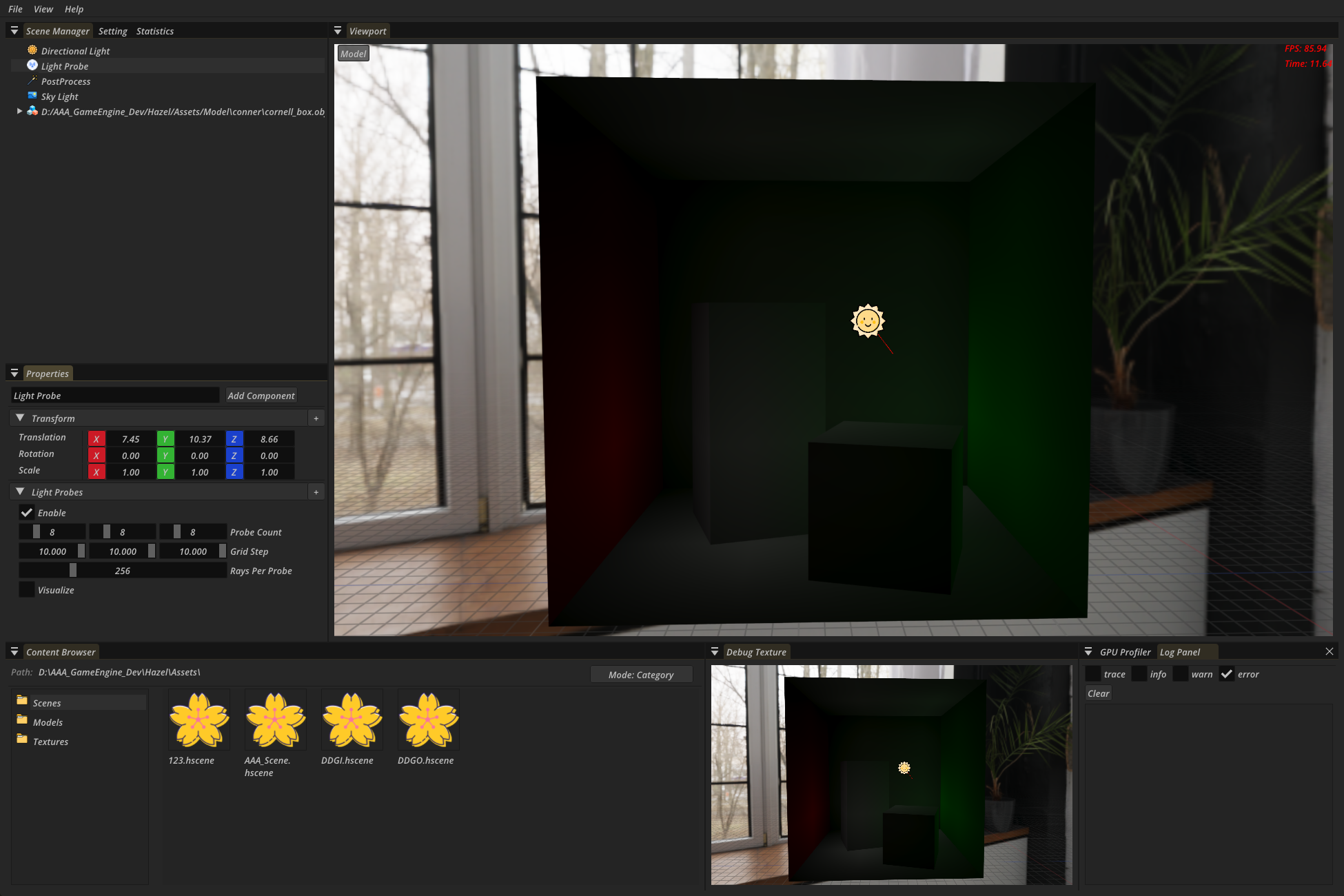

DDGI Probe

DDGIVolume

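Groups the probes into a bounding box and defines the probe count, the probe spacing, and the other DDGI parameters. I implemented a new probe component to represent it, along with its debug visualization.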
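To make the volume description concrete, here is a minimal sketch of what such a parameter block could look like on the shader side. The struct layout, the field names, and the assumption that the grid is centered on `origin` are all hypothetical; the component in this project may be organized differently.

```glsl
// Hypothetical parameter block; field names are assumptions, not the project's layout.
struct DDGIVolumeSketch
{
    vec3  origin;        // world-space center of the probe grid (assumed)
    vec3  probeSpacing;  // distance between neighboring probes on each axis
    uvec3 probeCount;    // number of probes along x, y, z (each at least 1)
    uint  raysPerProbe;  // e.g. 256
    float hysteresis;    // temporal blend factor in [0,1)
};

// World position of the probe at integer grid coordinates.
vec3 ProbeWorldPositionSketch(uvec3 coords, DDGIVolumeSketch v)
{
    // Full extent of the grid, measured between the first and last probe.
    vec3 extent = v.probeSpacing * vec3(v.probeCount - uvec3(1));
    return v.origin - 0.5 * extent + vec3(coords) * v.probeSpacing;
}
```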

ProbeTrace

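This stage uses ray tracing to compute and store the radiance carried by every ray that each probe casts in all directions (one lighting evaluation per hit point).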

- Read the volume settings. With y-up, the y axis becomes the layer of the image2DArray, so each layer holds probeCount.x * probeCount.z probes.


- Launch the ray tracing pass: TraceRays(raysPerProbe, probeCountPreLayer, volumeLayerCount)

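The y-up layer layout described above boils down to a few index conversions. The sketch below shows one consistent way to write them; the function names are hypothetical stand-ins and are not claimed to match the `DDGIGet...` helpers used in the shaders of this post.

```glsl
// y-up: each texture-array layer is one horizontal (x, z) slab of the probe grid.
uint ProbesPerLayerSketch(uvec3 probeCount)
{
    return probeCount.x * probeCount.z;
}

// Linear probe index -> grid coordinates (x, y, z), where y is the layer.
uvec3 ProbeCoordsSketch(uint probeIndex, uvec3 probeCount)
{
    uint perLayer = ProbesPerLayerSketch(probeCount);
    uint layer    = probeIndex / perLayer;
    uint inLayer  = probeIndex % perLayer;
    return uvec3(inLayer % probeCount.x, layer, inLayer / probeCount.x);
}

// Grid coordinates -> linear probe index (inverse of the function above).
uint ProbeIndexSketch(uvec3 coords, uvec3 probeCount)
{
    return coords.y * ProbesPerLayerSketch(probeCount)
         + coords.z * probeCount.x
         + coords.x;
}

// Ray data texel: x = ray index, y = probe index inside its layer, z = layer.
uvec3 RayDataTexelSketch(uint rayIndex, uint probeIndex, uvec3 probeCount)
{
    uint perLayer = ProbesPerLayerSketch(probeCount);
    return uvec3(rayIndex, probeIndex % perLayer, probeIndex / perLayer);
}
```

With this layout the launch size (raysPerProbe, probes per layer, layer count) maps one-to-one onto the ray data texture, so the launch ID can be used almost directly as the output coordinate.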

Ray tracing shaders

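RayGen has to compute lighting and shadows at the hit point of every ray of every probe, and blend in the history: the irradiance of the 8 probes around the hit point, taken from the previous frame, serves as the hit point's indirect lighting (only the diffuse term is computed).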

```glsl
struct Payload
{
    vec3  albedo;
    float roughness;
    vec3  worldPosition;
    float metallic;
    vec3  normal;
    float hitT;
    uint  hitKind; // written by the closest-hit shader, read below for the backface test
};

void main()
{
    uint rayIndex = gl_LaunchIDEXT.x;
    uint probePlaneIndex = gl_LaunchIDEXT.y;
    uint planeIndex = gl_LaunchIDEXT.z;

    uint probeCountPrePlane = DDGIGetProbesPerPlane(volume.probeCount); // x * z (y-up)
    uint probeIndex = (planeIndex * probeCountPrePlane) + probePlaneIndex;

    uvec3 probeCoords = DDGIGetProbeCoords(probeIndex, volume); // probe coordinates in the probe grid
    vec3 probeWorldPosition = DDGIGetProbeWorldPosition(probeCoords, volume);
    vec3 rayDirection = normalize(RTXGISphericalFibonacci(rayIndex, volume.raysPerProbe)); // generate the ray direction

    // The result of every ray is stored: Texture2DArray(x: rayIndex, y: probeIndexInLayer, z: layerIndex)
    uvec3 outputCoords = DDGIGetRayDataTexelCoords(rayIndex, probeIndex, volume);

    // Launch the primary ray
    traceRayEXT(TLAS,                     // acceleration structure
                gl_RayFlagsOpaqueEXT,     // rayFlags: control ray behavior (culling, any-hit invocation, early termination, ...)
                0xFF,                     // cullMask: 0xFF matches every instance
                0,                        // sbtRecordOffset: first record in the Shader Binding Table (SBT)
                1,                        // sbtRecordStride: stride between SBT records (in records, not bytes)
                0,                        // missIndex: which miss shader in the SBT runs when nothing is hit
                probeWorldPosition.xyz,   // ray origin
                0,                        // ray min range
                rayDirection.xyz,         // ray direction
                MAX_RAY_TRACING_DISTANCE, // TODO: replace with a DDGI-specific setting
                0                         // payload location (location = 0)
    );

    // Miss: store the sky radiance
    if (payload.hitT == -1.f) {
        imageStore(out_RAYDATA, ivec3(outputCoords), vec4(payload.albedo, 1e27f));
        return;
    }

    // Backface hit, following RTXGI:
    // Make the hit distance negative to mark a backface hit for blending, probe relocation, and probe classification.
    // Shorten the hit distance on a backface hit by 80% to decrease the influence of the probe during irradiance sampling.
    if (payload.hitKind == gl_HitKindBackFacingTriangleEXT) {
        imageStore(out_RAYDATA, ivec3(outputCoords), vec4(vec3(0), -payload.hitT * 0.2));
        return;
    }

    /////////////////////////////////////////////
    // Directional light. TODO: point and spot lights.
    // Shadows are also resolved here by tracing a ray.
    /////////////////////////////////////////////
    vec3 brdf = (payload.albedo / PI);
    vec3 lighting = vec3(0.f);

    DirectionLight dirLight = GetDirectionLight();

    // Stop at the first hit | skip the closest-hit shader and return to RayGen | treat all geometry as opaque
    const uint rayFlags = gl_RayFlagsTerminateOnFirstHitEXT | gl_RayFlagsSkipClosestHitShaderEXT | gl_RayFlagsOpaqueEXT;

    // The closest-hit shader is skipped, so only the miss shader writes this payload.
    // Initialize it to any value other than -1 so that a hit is detectable.
    shadowPayload.hitT = 0.f;

    // Trace the shadow ray
    traceRayEXT(TLAS,
                rayFlags,
                0xFF,
                0,
                1,
                1,                        // missIndex 1: shadow miss shader
                payload.worldPosition,
                0,
                -dirLight.direction,
                MAX_RAY_TRACING_DISTANCE,
                1                         // payload location (location = 1)
    );

    float shadowScale = 1.0;
    if (shadowPayload.hitT != -1.f) {
        shadowScale = 0.0;
    }

    vec3 lightDirection = -normalize(dirLight.direction);
    float nol = max(dot(payload.normal, lightDirection), 0.f);
    vec3 dirLighting = nol * dirLight.radiance * shadowScale;
    lighting += dirLighting;

    vec3 diffuse = lighting * brdf;

    /////////////////////////////////////////////
    // Indirect light
    /////////////////////////////////////////////
    vec3 irradiance = vec3(0);
    float volumeBlendWeight = DDGIGetVolumeBlendWeight(payload.worldPosition, volume);
    if (volumeBlendWeight > 0) {
        irradiance = DDGIGetIrrandianceByWorldPosition(
            payload.worldPosition,
            payload.normal,
            volume,
            ddgi_Irrandiance, ddgi_Distance);
    }

    // Perfectly diffuse reflectors don't exist in the real world.
    // Limit the BRDF albedo to a maximum value to account for the energy loss at each bounce.
    float maxAlbedo = 0.9f;
    vec3 radiance = diffuse + ((min(payload.albedo, vec3(maxAlbedo, maxAlbedo, maxAlbedo)) / PI) * irradiance * volumeBlendWeight);

    // Final ray data: radiance (3) + hitT (1)
    imageStore(out_RAYDATA, ivec3(outputCoords), vec4(Saturate(radiance), payload.hitT));
}
```

ClosestHit has to fetch the surface data of the mesh at the hit point:

```glsl
#ifdef RAYCLOSEST_HIT_SHADER

layout(location = 0) rayPayloadInEXT Payload payload;
hitAttributeEXT vec2 attribs;

void main()
{
    uint instanceID = gl_InstanceCustomIndexEXT; // ID assigned to each instance when the TLAS is built
    uint primitiveID = gl_PrimitiveID;           // index of the triangle that was hit
    vec3 barycentrics = vec3(1.0 - attribs.x - attribs.y, attribs.x, attribs.y);

    // Gather the mesh info
    mat4 model = GetModelMatrix(instanceID);
    uvec3 triangleIndex = GetTriangleIndex(instanceID, primitiveID);
    vec4 position = GetTrianglePosition(instanceID, triangleIndex, barycentrics);
    vec3 meshNormal = GetTriangleMeshNormal(instanceID, triangleIndex, barycentrics);
    vec4 tangent = GetTriangleTangent(instanceID, triangleIndex, barycentrics);
    vec3 worldNormal = GetWorldNormal(meshNormal, model);
    vec4 worldTangent = GetWorldTangent(tangent, model);
    vec2 texCoord = GetTriangleTexCoord(instanceID, triangleIndex, barycentrics);
    vec4 worldPos = model * position;

    MaterialInfo material = GetMaterialInfo(instanceID);
    vec4 albedo = GetDiffuse(material, texCoord);
    vec3 normal = GetNormal(material, texCoord, worldNormal, worldTangent);
    vec4 emission = GetEmission(material, texCoord);
    albedo += emission;
    float roughness = GetRoughness(material, texCoord);
    float metallic = GetMetallic(material, texCoord);

    payload.worldPosition = worldPos.xyz;
    payload.normal = normal;
    payload.roughness = roughness;
    payload.metallic = metallic;
    payload.albedo = albedo.rgb;
    payload.hitT = gl_HitTEXT;
    payload.hitKind = gl_HitKindEXT;
}

#endif
```

Miss handles rays that hit nothing. Two miss shaders are needed: one returns the sky, and one sets the flag used by the shadow test. (A miss shader only works on one incoming payload; as far as I can tell only RayGen can define several payloads, while the other stages each handle a single one.)

```glsl
#ifdef RAYMISS_SHADER
layout(location = 0) rayPayloadInEXT Payload payload; // note: rayPayloadInEXT, not rayPayloadEXT
layout(set = 1, binding = 3) uniform textureCube skyCube;

void main()
{
    vec3 rayDir = normalize(gl_WorldRayDirectionEXT);
    payload.albedo = texture(samplerCube(skyCube, SAMPLER[0]), rayDir).rgb;
    payload.hitT = -1;
}
#endif
```

```glsl
#ifdef RAYMISS_SHADER
layout(location = 1) rayPayloadInEXT ShadowPayLoad shadowPayload;

void main()
{
    shadowPayload.hitT = -1;
}
#endif
```

Once ray tracing has finished, every ray of every probe carries its radiance.

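In addition, each hit point samples the lighting data of its 8 surrounding probes (the values recorded in the previous frame), which gives that point its indirect lighting.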
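The RayGen shader relies on `RTXGISphericalFibonacci` to spread the rays evenly over the sphere. For readers who have not seen it, the idea can be sketched as follows. This is my own minimal version under a hypothetical name, not a copy of the RTXGI function; as far as I know the reference implementation also applies a random rotation to the whole set every frame, which is left out here.

```glsl
const float TWO_PI_SKETCH = 6.28318530718;

// Direction i of n points distributed quasi-uniformly over the unit sphere.
vec3 SphericalFibonacciSketch(uint i, uint n)
{
    // Fractional part of the golden ratio: (sqrt(5) - 1) / 2.
    const float goldenFrac = 0.61803398875;

    // Azimuth advances by the golden angle, wrapped into one turn.
    float phi = TWO_PI_SKETCH * fract(float(i) * goldenFrac);

    // cos(theta) steps linearly from just under +1 down to just above -1,
    // which gives equal-area bands on the sphere.
    float cosTheta = 1.0 - (2.0 * float(i) + 1.0) / float(n);
    float sinTheta = sqrt(clamp(1.0 - cosTheta * cosTheta, 0.0, 1.0));

    return vec3(cos(phi) * sinTheta, sin(phi) * sinTheta, cosTheta);
}
```

Because the direction depends only on the ray index and the ray count, the blend pass can regenerate the same direction for each ray instead of storing it.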

ProbeBlend

Update the probe data from RayData.

IrradianceBlend

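In theory a probe would have to store, for every direction, the hemispherical integral that gives the irradiance at its position, but storing all directions is not practical. DDGI stores the irradiance for 36 directions per probe in 6*6 texels and interpolates between them when sampling. Octahedral mapping is used: for each probe a 6*6 region is written, each texel of the region maps to a direction on the octahedron, that direction is projected onto the sphere, and all rays are then blended with a weight that depends on how well they align with that direction.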
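The octahedral mapping mentioned above can be sketched with the usual encode/decode pair. These are the standard textbook formulas under hypothetical function names; the one-texel border is ignored here, so `texel` is assumed to index only the interior 6*6 (or 14*14) block.

```glsl
// Map a point in [-1,1]^2 on the unfolded octahedron to a unit direction.
vec3 OctDecodeSketch(vec2 o)
{
    vec3 v = vec3(o, 1.0 - abs(o.x) - abs(o.y));
    if (v.z < 0.0) {
        // Lower hemisphere: fold the outer triangles back over the diagonals.
        vec2 s = vec2(v.x >= 0.0 ? 1.0 : -1.0, v.y >= 0.0 ? 1.0 : -1.0);
        v.xy = (1.0 - abs(v.yx)) * s;
    }
    return normalize(v);
}

// Inverse mapping: unit direction to a point in [-1,1]^2.
vec2 OctEncodeSketch(vec3 n)
{
    vec2 o = n.xy / (abs(n.x) + abs(n.y) + abs(n.z));
    if (n.z < 0.0) {
        vec2 s = vec2(o.x >= 0.0 ? 1.0 : -1.0, o.y >= 0.0 ? 1.0 : -1.0);
        o = (1.0 - abs(o.yx)) * s;
    }
    return o;
}

// Direction represented by the center of an interior texel.
// side is the interior resolution (6 for irradiance, 14 for distance).
vec3 TexelDirectionSketch(ivec2 texel, int side)
{
    vec2 o = (vec2(texel) + 0.5) / float(side) * 2.0 - 1.0;
    return OctDecodeSketch(o);
}
```

The blend pass would call `TexelDirectionSketch` once per texel, and the lighting pass would call `OctEncodeSketch` on the surface normal to find where to sample inside a probe's block.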

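Note that when sampling a Texture2DArray, the w component of uvw is not normalized to 0-1: pass the index of the layer you want directly. I overlooked this and it cost me a lot of debugging time.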
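A minimal illustration of that pitfall, with a hypothetical function and sampler name: only `uv` is normalized, while the third coordinate is the layer index converted to float.

```glsl
// probeTex is an assumed array texture holding one probe slab per layer.
vec4 SampleProbeLayerSketch(sampler2DArray probeTex, vec2 uv, uint layer)
{
    // Correct: pass the layer index itself, e.g. 3.0 for the fourth layer.
    // Passing layer / layerCount would silently read the wrong layer.
    return textureLod(probeTex, vec3(uv, float(layer)), 0.0);
}
```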


DistanceBlend

For distance, only a few things differ.
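Since the details of those differences are not listed here, the following is only a sketch of what a distance blend typically accumulates: the weighted mean of the hit distance and of its square, as described in the overview. The function names, the `sharpness` exponent, and the clamp to `maxDistance` are assumptions for illustration, not the code of this project.

```glsl
// acc.x = sum of d * w, acc.y = sum of d^2 * w, acc.z = sum of w
void AccumulateDistanceSketch(vec3 texelDir, vec3 rayDir, float hitT,
                              float maxDistance, float sharpness, inout vec3 acc)
{
    // A power of the cosine makes the distance filter narrower than the
    // irradiance filter (sharpness is assumed to be > 0).
    float w = pow(max(0.0, dot(texelDir, rayDir)), sharpness);

    // Backface hits are stored with a negative hitT and misses with a huge
    // value, so take the magnitude and clamp it.
    float d = min(abs(hitT), maxDistance);

    acc += vec3(d * w, d * d * w, w);
}

// Weighted means of hitT and hitT^2, blended with the previous frame.
vec2 ResolveDistanceSketch(vec3 acc, vec2 previous, float hysteresis)
{
    vec2 current = acc.xy / max(acc.z, 1e-6);
    return mix(current, previous, hysteresis); // hysteresis = share of history kept
}
```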


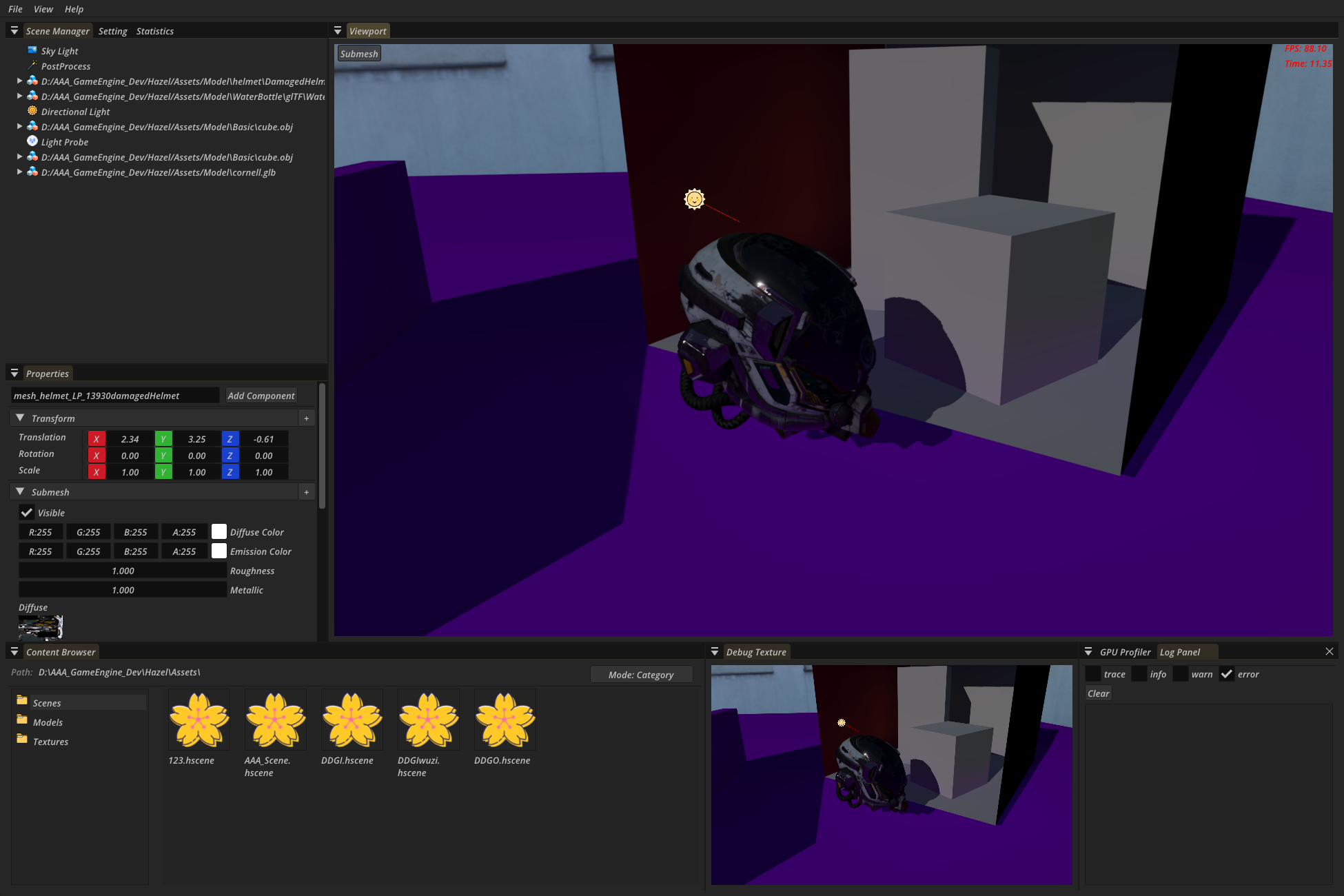

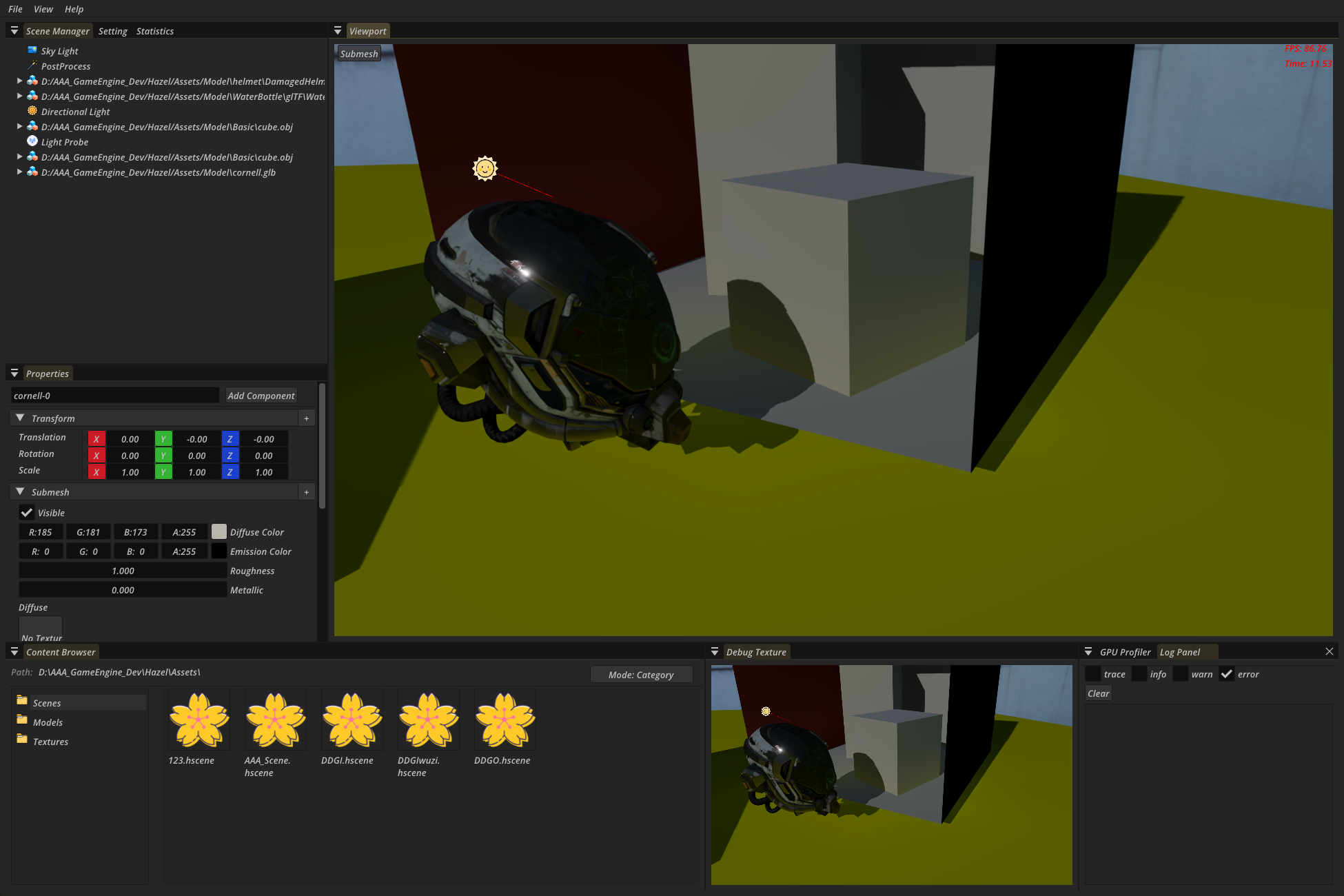

IndirectLight


The image below shows the scene rendered with indirect lighting only.

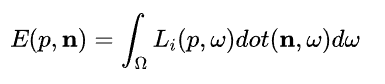

Math

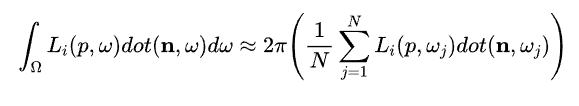

First, recall that irradiance is the integral of radiance over all directions of the unit hemisphere, weighted by the cosine term.

This integral is approximated with Monte Carlo integration using uniformly distributed samples.
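Written out in text form, and assuming the directions $\omega_i$ are drawn uniformly over the hemisphere $\Omega$ around the normal $\mathbf{n}$ (so the pdf is $1/2\pi$), the integral and its estimator are:

$$
E(\mathbf{n}) = \int_{\Omega} L(\omega)\,\cos\theta\,\mathrm{d}\omega
\;\approx\; \frac{1}{N}\sum_{i=1}^{N}\frac{L(\omega_i)\cos\theta_i}{1/(2\pi)}
= \frac{2\pi}{N}\sum_{i=1}^{N} L(\omega_i)\cos\theta_i
$$

Here $L(\omega_i)$ is the radiance carried by ray $i$ and $\theta_i$ is the angle between that ray and $\mathbf{n}$; the symbols are my own notation and may differ from the figures.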

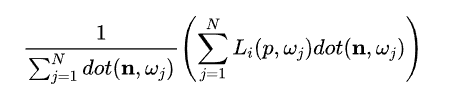

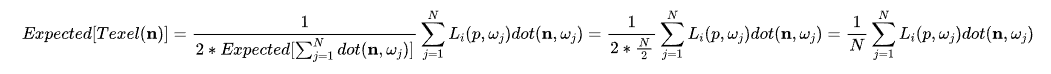

When DDGI stores an irradiance value it does not divide by N but by the sum of the cosine weights; the goal is to reduce variance.

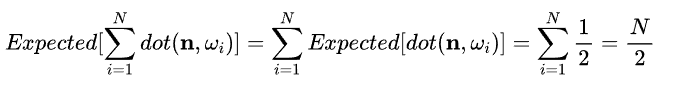

The expected value of that sum is N/2, so to match the Monte Carlo estimator the denominator needs an extra factor of 2.
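In shader terms the normalization can be sketched as below. The function names are hypothetical and this is not the blend shader of this project. The sketch assumes that the remaining constant of the estimator (2π for a uniform hemisphere) is applied later, where the irradiance is sampled, which is how I understand the reference implementation to work.

```glsl
// acc.rgb = sum of radiance * cos, acc.a = sum of cos
void AccumulateIrradianceSketch(vec3 texelDir, vec3 rayDir, vec3 radiance, inout vec4 acc)
{
    // Rays pointing away from the texel direction get zero weight.
    float w = max(0.0, dot(texelDir, rayDir));
    acc += vec4(radiance * w, w);
}

vec3 ResolveIrradianceSketch(vec4 acc, vec3 previous, float hysteresis)
{
    // The cosine sum is about N/2, so dividing by 2 * sum(cos) plays the
    // role of the 1/N in the Monte Carlo estimator, with less variance.
    vec3 current = acc.rgb / (2.0 * max(acc.a, 1e-6));

    // Temporal blend: hysteresis is the share of the previous frame kept.
    return mix(current, previous, hysteresis);
}
```

A blend shader would start from `acc = vec4(0.0)`, call `AccumulateIrradianceSketch` once per ray of the probe, and then write the result of `ResolveIrradianceSketch` to the probe's texel.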

Summary

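- This was my first time implementing a fairly complex global illumination effect, and I was overambitious: I kept trying to copy the practical tricks from RTXGI without really understanding them. I even decided to build the Image2DArray around a y-up layout, which made the logic very confusing. A lot of time went into pointless debugging.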

- Some parts of DDGI are still not implemented:

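  - RelocationVolumeProbes: count how many of a probe's rays hit backfaces. If the count exceeds a threshold, the probe is inside a wall and is moved in the direction of the backface hits. It is also moved if the distance to the front faces it hits is too short.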

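  - ClassifyVolumeProbes: classifies the probes so that those stuck inside walls or outside the view are not updated, which saves cost. More importantly, it avoids evaluating probes outside the walls, which reduces light leaking to some extent.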

- Overall, my first attempt at something this big was not very successful.